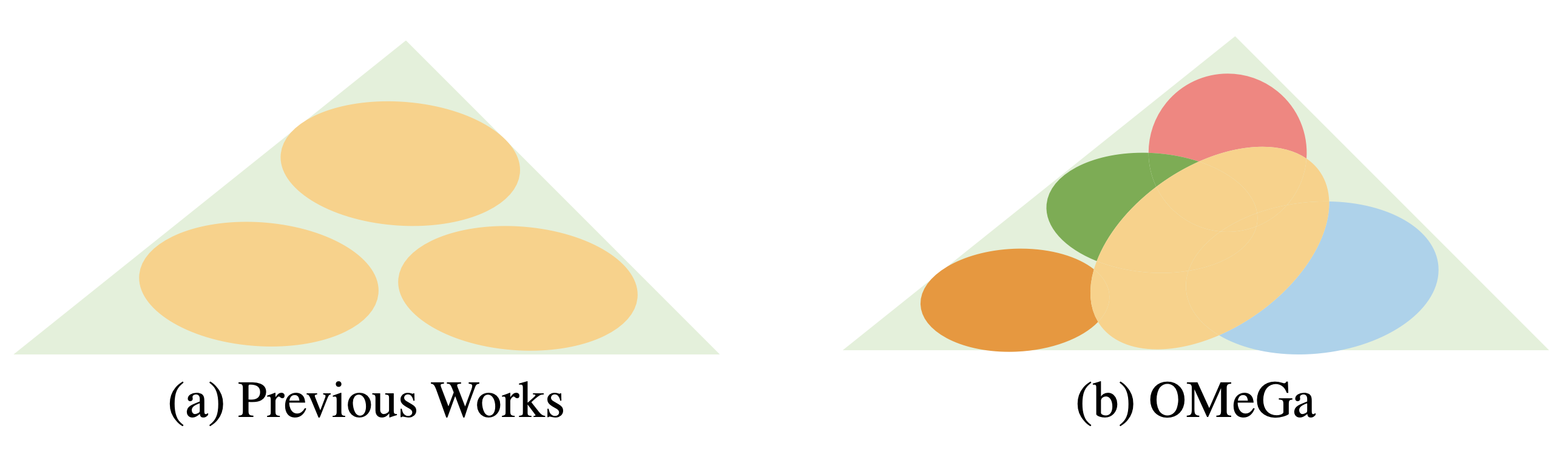

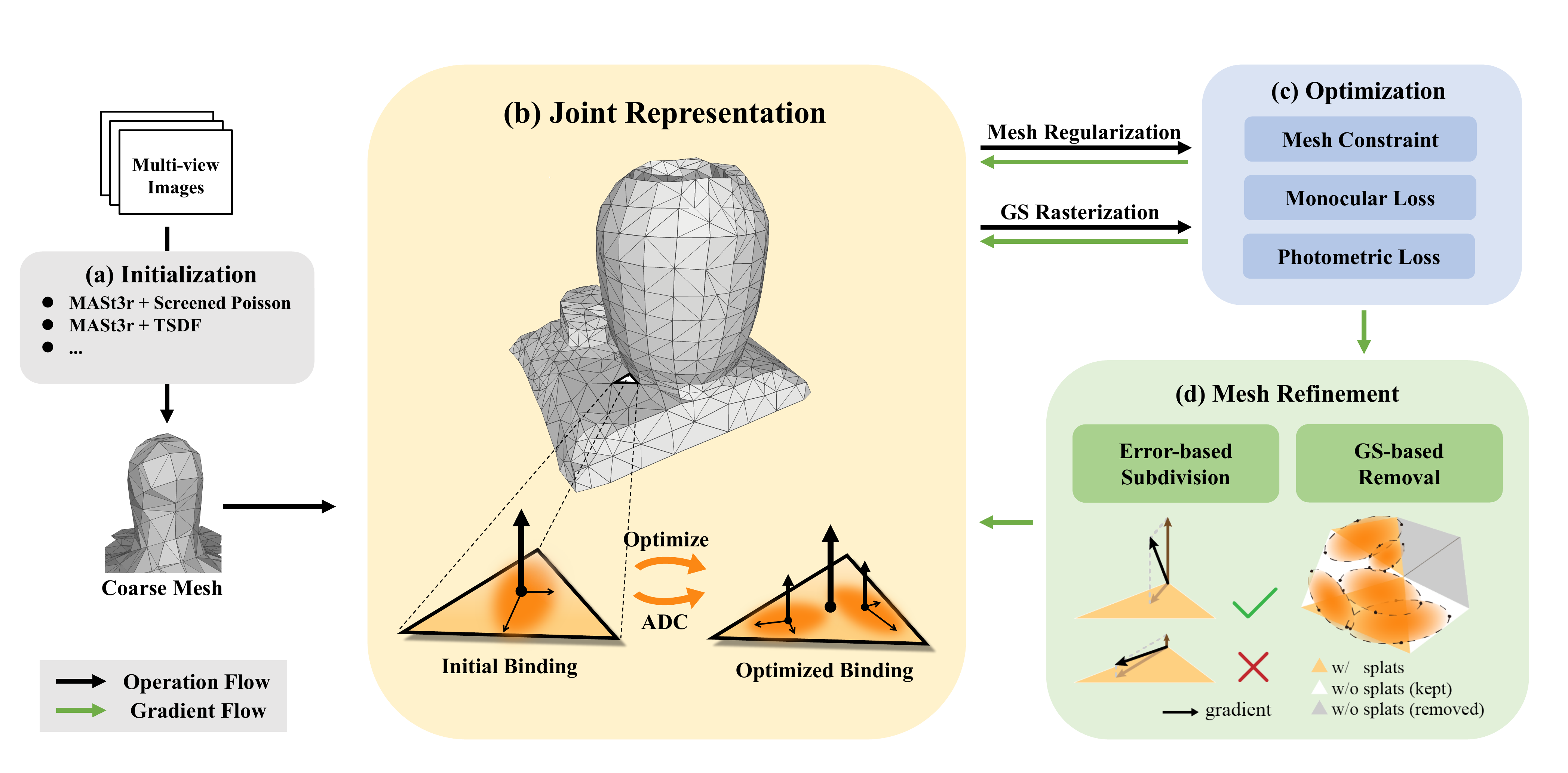

Our proposed OMeGa framework introduces a tightly coupled optimization paradigm. Its key technical contributions are:

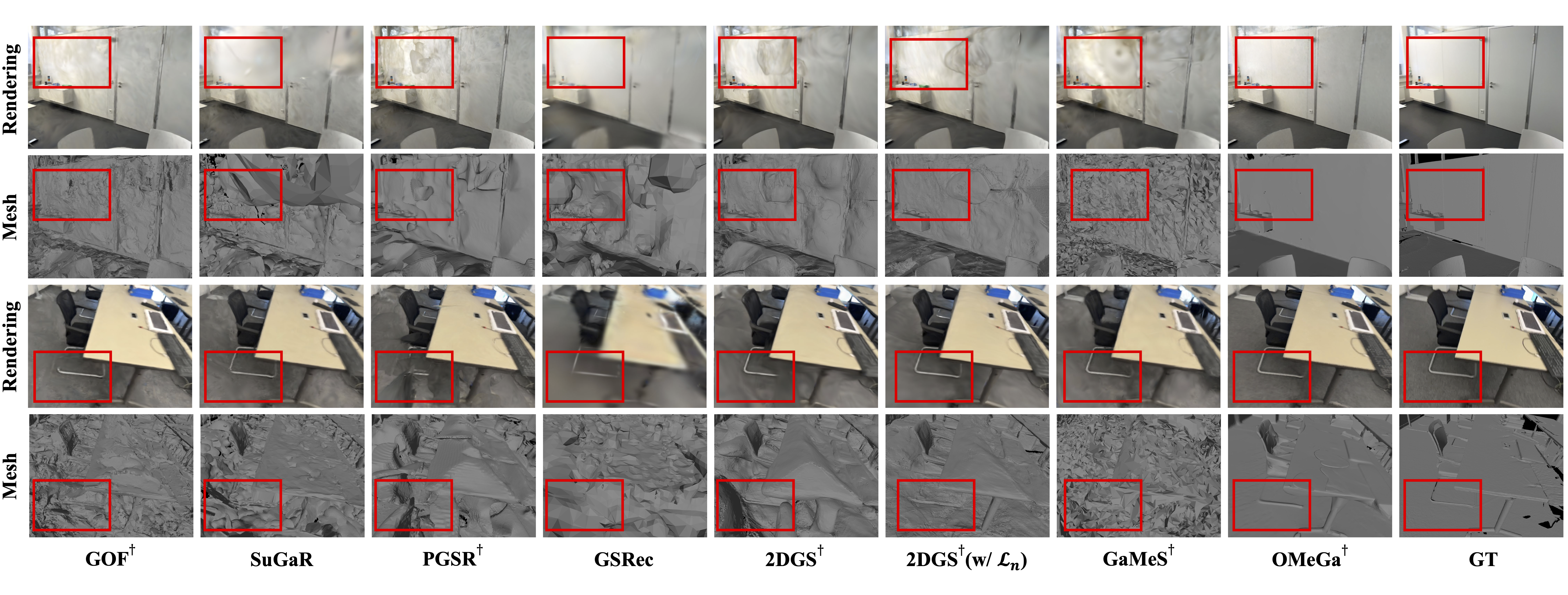

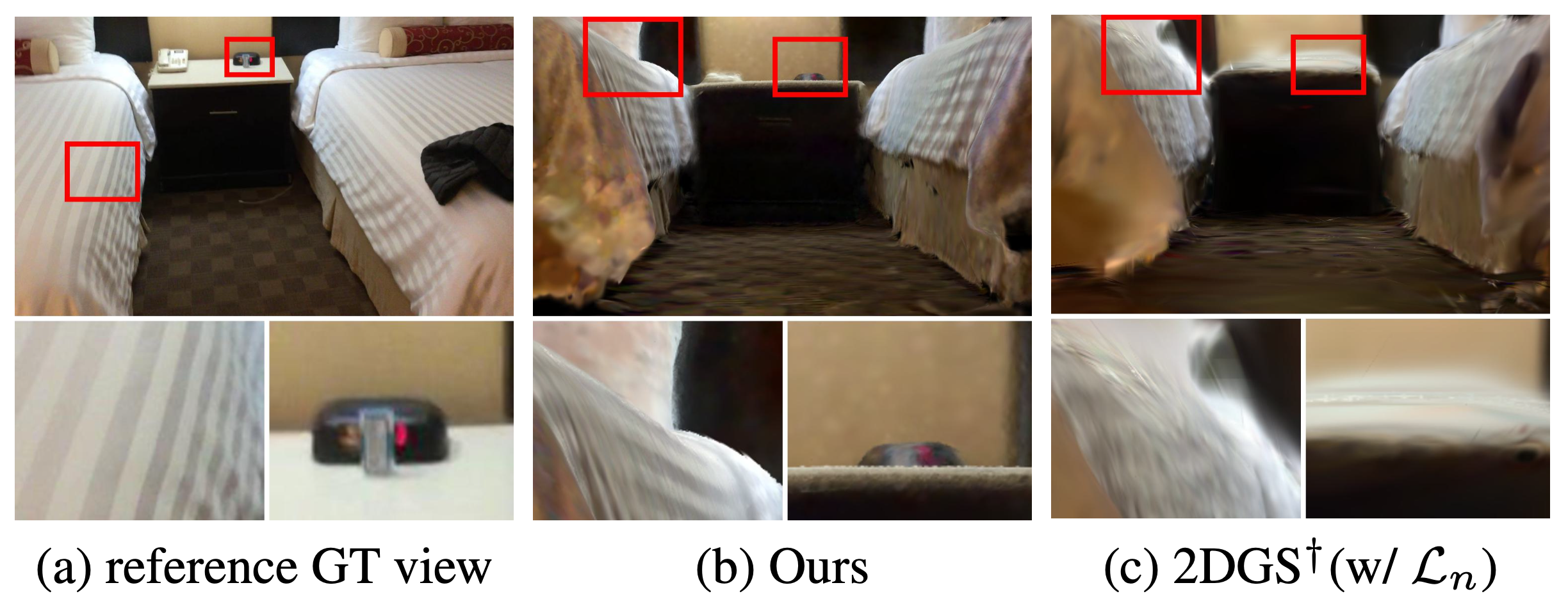

- End-to-end Joint Optimization: Unlike post-hoc mesh extraction methods, OMeGa directly and concurrently optimizes an explicit triangle mesh alongside 2D Gaussian splats.

- Flexible Binding Strategy: Spatial attributes of the splats are anchored to the mesh surface, ensuring geometric consistency, while texture attributes are retained on the splats to maintain high-fidelity rendering.

- Robust Geometry Regularization: We incorporate explicit mesh constraints (e.g., Laplacian smoothness) and monocular normal supervision directly into the pipeline, injecting additional geometric priors into the optimization.

- Heuristic Mesh Refinement: An iterative strategy automatically subdivides mesh faces with high error and prunes unreliable faces, dynamically adapting the mesh topology to capture fine geometric details.
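
To make the binding and regularization ideas above more concrete, here is a minimal, dependency-free sketch. It is not OMeGa's implementation: it assumes that a splat center is bound to a face through barycentric weights and that the Laplacian term is the uniform (neighbor-centroid) variant. The function names `bind_to_face` and `uniform_laplacian_loss` are hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def bind_to_face(vertices, face, bary):
    """Place a splat center on a triangle via barycentric weights.

    Assumption: the splat's spatial position is a convex combination of
    the three vertices of the face it is bound to, so moving the mesh
    moves the splat with it.
    """
    a, b, c = (vertices[i] for i in face)
    w0, w1, w2 = bary
    return tuple(w0 * a[k] + w1 * b[k] + w2 * c[k] for k in range(3))


def uniform_laplacian_loss(vertices, faces):
    """Mean squared distance between each vertex and its neighbors' centroid.

    Assumption: a uniform-weight Laplacian; other weightings (for example
    cotangent weights) are equally plausible choices.
    """
    # Build vertex adjacency from the triangle list.
    neighbors = defaultdict(set)
    for i, j, k in faces:
        neighbors[i].update((j, k))
        neighbors[j].update((i, k))
        neighbors[k].update((i, j))

    total = 0.0
    for v, nbrs in neighbors.items():
        centroid = [sum(vertices[n][d] for n in nbrs) / len(nbrs) for d in range(3)]
        total += sum((vertices[v][d] - centroid[d]) ** 2 for d in range(3))
    return total / len(neighbors)


# A unit square split into two triangles.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (1.0, 1.0, 0.0)]
faces = [(0, 1, 2), (1, 3, 2)]

center = bind_to_face(vertices, faces[0], (1 / 3, 1 / 3, 1 / 3))
flat_loss = uniform_laplacian_loss(vertices, faces)

# Lifting one corner out of the plane should raise the smoothness penalty.
bumped = vertices[:3] + [(1.0, 1.0, 1.0)]
bumped_loss = uniform_laplacian_loss(bumped, faces)
```

In a joint optimization of this kind, a term like `uniform_laplacian_loss` would typically be added to the rendering loss with a small weight, while the bound splat centers are recomputed from the current vertices at every step so that gradients from rendering reach the mesh.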